From silos to collaboration: the significance of Web3 native data pipelines

Written by Jay : : FP

Compilation: Deep Tide TechFlow

The release of the Bitcoin white paper in 2008 sparked a rethinking of the concept of trust. Blockchain then expanded its definition to include the notion of a trustless system and rapidly evolved to argue that different types of value, such as individual sovereignty, financial democratization, and ownership, could be applied to existing systems. Of course, a lot of validation and discussion may be required before blockchain can be used in practice, because its characteristics may appear somewhat radical compared with various existing systems. However, if we are optimistic about these scenarios, building data pipelines and analyzing the valuable information contained in blockchain storage has the potential to become another important turning point in the development of the industry, because we can observe Web3 that never existed before. Native business intelligence.

This paper explores the potential of Web3-native data pipelines by projecting data pipelines commonly used in existing IT markets into a Web3 environment. The article discusses the benefits of these pipelines, the challenges that need to be addressed, and the impact of these pipelines on the industry.

1. Singularity comes from information innovation

“Language is one of the most important differences between humans and lower animals. It’s not just the ability to make sounds, but to associate definite sounds with definite thoughts, and to use those sounds as symbols for the communication of ideas.” — Darwin

Historically, major advances in human civilization have been accompanied by innovations in information sharing. Our ancestors used language, both spoken and written, to communicate with each other and pass knowledge on to future generations. This gives them a major advantage over other species. The invention of writing, paper, and printing made it possible to share information more widely, which led to major advances in science, technology, and culture. The metal movable type printing of the Gutenberg Bible in particular was a watershed moment as it made possible the mass production of books and other printed materials. This had a profound impact on the beginnings of the Reformation, the Democratic Revolution, and scientific progress.

The rapid development of IT technology in the 2000s allowed us to gain a deeper understanding of human behavior. This has led to a change in lifestyle where most people in modern times make various decisions based on digital information. It is for this reason that we refer to modern society as the “IT Innovation Era”.

Only 20 years after the full commercialization of the Internet, artificial intelligence technology has once again amazed the world. There are many applications that can replace human labor, and many people are discussing the civilization that AI will change. Some are even in denial, wondering how such a technology could emerge so quickly that it could shake the foundations of our society. Although there is “Moore’s Law” that shows that the performance of semiconductors will increase exponentially over time, the changes brought about by the emergence of GPT are too sudden to be faced immediately.

Interestingly, however, the GPT model itself is not actually a very groundbreaking architecture. On the other hand, the AI industry will list the following as the main success factors for GPT models: 1) Definition of business domains that can target large customer groups, and 2) Model tuning through data pipelines - from data acquisition to final results and results-based feedback of. In short, these applications enable innovation by refining service delivery purposes and upgrading data/information processing processes.

2. Data-driven decisions are everywhere

Most of what we call innovation is actually based on the manipulation of accumulated data, not chance or intuition. As the saying goes, “In a capitalist market, it is not the strong who survive, but the survivors who are strong”. Today’s businesses are highly competitive and the market is saturated. Hence, businesses are collecting and analyzing all kinds of data to grab even the smallest niche.

We may be too obsessed with Schumpeter’s theory of “creative destruction” and too much emphasis on making decisions based on intuition. However, even great intuition is ultimately the product of an individual’s accumulated data and information. The digital world will penetrate more deeply into our lives in the future, and more and more sensitive information will be presented in the form of digital data.

The Web3 market is getting a lot of attention for its potential to give users control over their data. However, the blockchain field, which is the basic technology of Web3, is currently more concerned with solving the trilemma (Deep Tide Note: Triangular Dilemma, that is, security, decentralization, and scalability issues). For new technologies to be convincing in the real world, it is important to develop applications and intelligence that can be used in multiple ways. We’ve seen this happen in the Big Data space, and methodologies for building Big Data processing and data pipelines have advanced significantly since around 2010. In the context of Web3, efforts must be made to move the industry forward and build data flow systems to generate data-based intelligence.

3. Opportunities based on data flow on the chain

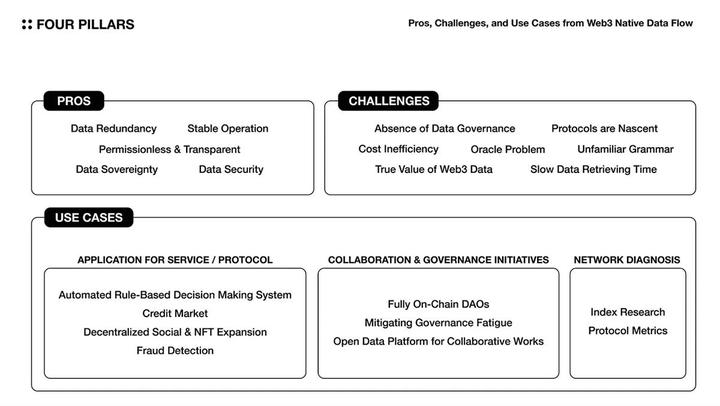

So, what opportunities can we capture from Web3-native streaming systems, and what challenges do we need to address to seize these opportunities?

3.1 Advantages

In short, the value of configuring Web3-native data streams is that reliable data can be safely and efficiently distributed to multiple entities so that valuable insights can be extracted.

- Data redundancy - on-chain data is less likely to be lost and more resilient because the protocol network stores data fragments on multiple nodes.

- Data Security - On-chain data is tamper-proof as it is verified and consensused by a network of decentralized nodes.

- Data sovereignty - Data sovereignty is the right of users to own and control their own data. With on-chain data streaming, users can see how their data is being used and choose to only share it with those who have a legitimate need to access it.

- Permissionless and transparent - on-chain data is transparent and tamper-proof. This ensures that the data being processed is also a reliable source of information.

- Stable operation - when the data flow is orchestrated by the protocol in a distributed environment, since there is no single point of failure, the probability of each layer being exposed to downtime is significantly reduced.

3.2 Application cases

Trust is the basis for different entities to interact with each other and make decisions. Therefore, when reliable data can be safely distributed, it means that many interactions and decisions can be made through Web3 services in which various entities participate. This helps maximize social capital, and we can imagine several use cases below.

3.2.1 Service/Protocol Application

Rules-Based Automated Decision System - Protocols use key parameters to run services. These parameters are adjusted regularly to stabilize the service status and provide users with the best experience. However, the protocol cannot always monitor the service status and make dynamic changes to parameters in a timely manner. This is what on-chain data flow does. On-chain data streams can be used to analyze service status in real-time and suggest the best set of parameters to match service requirements (e.g. applying an automatic floating rate mechanism for lending protocols).

- Credit Market Growth - Credit has traditionally been used in financial markets as a measure of an individual’s ability to repay. This helps to improve market efficiency. However, the definition of credit remains unclear in the Web3 market. This is due to the scarcity of personal data and lack of data governance across industries. Therefore, it becomes difficult to integrate and collect information. By building a process for collecting and processing fragmented data on-chain, it is possible to redefine the credit market in the Web3 market (for example, Spectral’s MACRO (multi-asset credit risk oracle) scoring).

- Decentralized social/NFT extensions - Decentralized societies prioritize user control, privacy protection, censorship resistance, and community governance. This provides an alternative social paradigm. Therefore, a pipeline can be established to control and update various metadata more smoothly and facilitate migration between platforms.

- Fraud detection - Web3 services using smart contracts are vulnerable to malicious attacks that can steal funds, compromise systems, and lead to decoupling and liquidity attacks. By creating a system that can detect these attacks in advance, Web3 services can develop rapid response plans and protect users from harm.

3.2.2 Collaboration and Governance Initiatives

- Fully on-chain DAOs - Decentralized Autonomous Organizations (DAOs) rely heavily on off-chain tools for efficient governance and public funding. By building an on-chain data processing process and creating a transparent process for DAO operations, the value of Web3’s native DAO can be further enhanced.

- Alleviate governance fatigue - Web3 protocol decisions are often made through community governance. However, there are many factors that can make it difficult for participants to participate in governance, such as geographic barriers, monitoring pressure, lack of expertise required for governance, randomly published governance agenda, and inconvenient user experience. A protocol governance framework could operate more efficiently and effectively if a tool could be created that simplifies the process for participants to go from understanding to actually implementing individual governance agenda items.

- Open data platforms for collaborative works - In existing academic and industrial circles, many data and research materials are not publicly disclosed, which can make the overall development of the market very inefficient. On the other hand, on-chain data pools can facilitate more collaborative initiatives than existing markets because they are transparent and accessible to anyone. The development of many token standards and DeFi solutions are good examples. Additionally, we may operate public data pools for various purposes.

3.2.3 Network Diagnosis

- Index Research - Web3 users create various indicators to analyze and compare the state of the protocol. Multiple objective metrics (e.g. Nakaflow’s Satoshi coefficient) can be studied and displayed in real time.

- Protocol Metrics - By crunching data such as the number of active addresses, the number of transactions, asset inflow/outflow, and fees incurred by the network, the performance of the protocol can be analyzed. This information can be used to assess the impact of specific protocol updates, the status of MEVs, and the health of the network.

3.3 Challenges

On-chain data has unique advantages that can increase industry value. However, to fully realize these benefits, many challenges must be addressed both within and outside the industry.

- Lack of Data Governance - Data Governance is the process of establishing consistent and shared data policies and standards to facilitate the integration of every data primitive. Currently, each on-chain protocol establishes its own standards and retrieves its own data types. The problem, however, is the lack of data governance between the entities that aggregate these protocol data and provide API services to users. This makes integration between services difficult, and as a result, it is difficult for users to obtain reliable and comprehensive insights.

- Cost inefficiency - Storing cold data in the protocol saves users data security and server costs. However, if the data needs to be accessed frequently for analysis or requires significant computing resources, it may not be cost-effective to store it on the blockchain.

- The oracle problem - smart contracts are only fully functional when they have access to data from the real world. However, these data are not always reliable or consistent. Unlike blockchains, which maintain integrity through consensus algorithms, external data is not deterministic. Oracle solutions must evolve to ensure external data integrity, quality, and scalability independent of a specific application layer.

- The protocol is in its infancy - the protocol uses its own token to incentivize users to keep the service running and pay for it. However, the parameters required to operate the protocol (e.g., the precise definition and incentive scheme of service users) are often naively managed. This means that the economic sustainability of the protocol is difficult to verify. If many protocols connect organically and create data pipelines, there will be greater uncertainty about whether the pipelines will work well.

- Slow data retrieval time - Protocols typically process transactions through consensus of many nodes, which limits the speed and volume of information processing compared to traditional IT business logic. This bottleneck is difficult to resolve unless the performance of all the protocols that make up the pipeline is significantly improved.

- The real value of Web3 data - blockchains are isolated systems that are not yet connected to the real world. When collecting Web3 data, we need to consider whether the collected data can provide meaningful insights enough to cover the cost of building the data pipeline.

- Unfamiliar syntax - Existing IT data infrastructure and blockchain infrastructure operate very differently. Even the programming language used is different, and blockchain infrastructure often uses low-level languages or new languages designed specifically for blockchain needs. This makes it difficult for new developers and service users to learn how to deal with each data primitive, as they need to learn a new programming language or a new way of thinking about working with blockchain data.

4. Pipelined Web3 Data Lego

There are no connections between current Web3 data primitives, they extract and process data independently. This makes it difficult to experiment with synergies in information processing. To address this issue, this paper introduces a data pipeline commonly used in the IT market and maps existing Web3 data primitives onto this pipeline. This will make the use case more specific.

4.1 General Data Pipeline

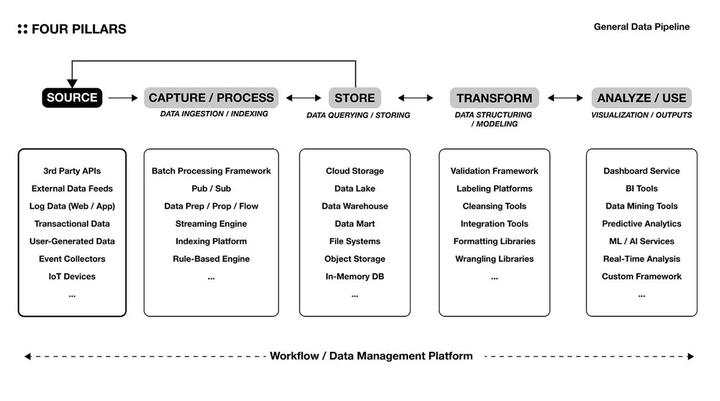

Building a data pipeline is like the process of conceptualizing and automating repetitive decision-making processes in everyday life. By doing so, information of a specific quality is readily available and used for decision-making. The more unstructured data to process, the more frequently the information is used, or the more real-time analysis is required, the time and cost of gaining the proactiveness needed for future decisions can be saved by automating these processes.

The diagram above shows a common architecture for building data pipelines in the existing IT infrastructure market. Data suitable for analytical purposes is collected from the correct data source and stored in an appropriate storage solution according to the nature of the data and the analytical requirements. For example, data lakes provide raw data storage solutions for scalable and flexible analysis, while data warehouses focus on storing structured data for query and analysis optimized for specific business logic. The data is then processed into insight or useful information in various ways.

Each solution level is also available as a packaged service. There is also increasing interest in ETL (Extract, Transform, Load) SaaS product groups that connect the chain of processes from data extraction to loading (eg FiveTran, Panoply, Hivo, Rivery). The sequence is not always unidirectional, and the layers can be connected to each other in a variety of ways, depending on the specific needs of the organization. The most important thing when building a data pipeline is to minimize the risk of data loss that can occur as data is sent and received to each server tier. This can be achieved by optimizing the decoupling of servers and using reliable data storage and processing solutions.

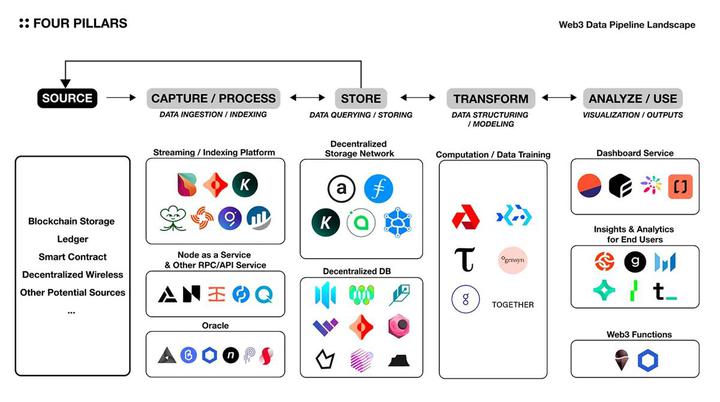

4.2 Pipeline with on-chain environment

The conceptual diagram of the data pipeline introduced earlier can be applied to the on-chain environment, as shown in the above figure, but it should be noted that a completely decentralized pipeline cannot be formed, because each basic component depends to some extent on the Centralized off-chain solution. In addition, the above figure does not currently include all Web3 solutions, and the boundaries of classification may be blurred—for example, KYVE, in addition to serving as a streaming media platform, also includes the function of a data lake, which can be regarded as a data pipeline itself. Also, Space and Time is classified as a decentralized database, but it offers API gateway services such as RestAPI and streaming, as well as ETL services.

4.2.1 Capture/Processing

In order for common users or dApps to efficiently consume/operate services, they need to be able to easily identify and access data sources primarily generated within the protocol, such as transactions, state, and log events. This layer is where a middleware comes into play, helping with processes including oracles, messaging, authentication, and API management. The main solutions are as follows.

Streaming/Indexing Platform

Bitquery, Ceramic, KYVE, Lens, Streamr Network, The Graph, block explorers of various protocols, etc.

node-as-a-service and other RPC/API services

Alchemy、All that Node、Infura、Pocket Network、Quicknode 等。

Oracle

API 3, Band Protocol, Chainlink, Nest Protocol, Pyth, Supra oracles, etc.

4.2.2 Storage

Compared with Web2 storage solutions, Web3 storage solutions have several advantages such as persistence and decentralization. However, they also have some disadvantages, such as high cost, difficulty in data update and query. As a result, various solutions have emerged to address these shortcomings and enable efficient processing of structured and dynamic data on Web3 - each with different characteristics such as the type of data processed, whether it is structured and whether it is With embedded query function and so on.

Decentralized storage network

Arweave、Filecoin、KYVE、Sia、Storj etc.

Decentralized database

Arweave-based databases (Glacier, HollowDB, Kwil, WeaveDB), ComposeDB, OrbitDB, Polybase, Space and Time, Tableland, etc.

* Each protocol has a different permanent storage mechanism. For example, Arweave is a blockchain-based model, similar to Ethereum storage, storing data permanently on-chain, while Filecoin, Sia, and Storj are contract-based models, storing data off-chain.

4.2.3 Conversion

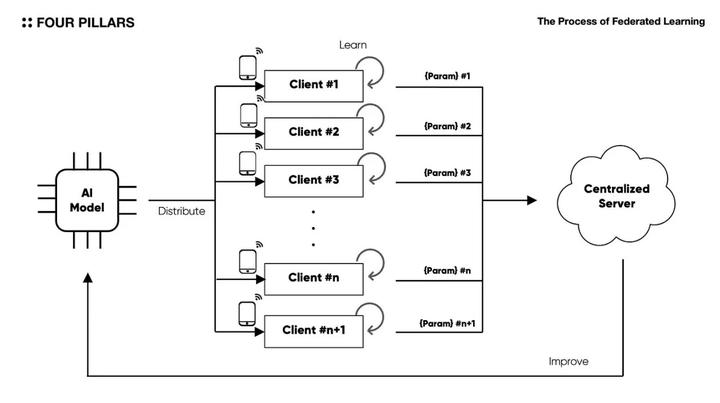

In the context of Web3, the translation layer is as important as the storage layer. This is because the structure of the blockchain basically consists of a distributed collection of nodes, which makes it easy to use scalable backend logic. In the AI industry, people are actively exploring the use of these advantages for research in the field of federated learning, and protocols dedicated to machine learning and AI operations have emerged.

Data training/modeling/calculation

Akash、Bacalhau、Bitensor、Gensyn、Golem、Together 等。

* Federated learning is a method for training artificial intelligence models by distributing the original model on multiple native clients, using stored data to train it, and then collecting the learned parameters on a central server.

4.2.4 Analysis/Use

The dashboard services and end-user insights and analytics solutions listed below are platforms that allow users to observe and discover various insights from specific protocols. Some of these solutions also provide API services to the end product. However, it is important to note that the data in these solutions is not always accurate as they mostly use separate off-chain tools to store and process the data. Errors between solutions can also be observed.

At the same time, there is a platform called “Web3 Functions” that can automatically/trigger the execution of smart contracts, just like centralized platforms such as Google Cloud trigger/execute specific business logic. Using this platform, users can implement business logic in a Web3-native way, instead of just processing on-chain data to gain insights.

Dashboard Services

Dune Analytics、Flipside Crypto、Footprint、Transpose 等。

End User Insights and Analysis

Chainalaysis、Glassnode、Messari、Nansen、The Tie、Token Terminal etc.

Web3 Functions

Chainlink’s Functions, Gelato Network, etc.

5. Concluding Thoughts

As Kant said, we can only witness the appearance of things, but not their essence. Nevertheless, we use records of observations called “data” to process information and knowledge, and we see how innovations in information technology drive the development of civilization. Therefore, building a data pipeline in the Web3 marketplace, in addition to being decentralized, can play a key role as a starting point to actually capture these opportunities. I would like to conclude this article with a few thoughts.

5.1 The role of storage solutions will become more important

The most important prerequisite to having a data pipeline is to establish data and API governance. In an increasingly diverse ecosystem, the specifications created by each protocol will continue to be recreated, and fragmented transaction records through multi-chain ecosystems will make it more difficult for individuals to derive comprehensive insights. Then, “storage solutions” are entities that can provide integrated data in a unified format by collecting fragmented information and updating the specifications of each protocol. We observe that existing market storage solutions such as Snowflake and Databricks are growing rapidly, have large customer bases, are vertically integrated by operating at various levels in the pipeline, and are leading the industry.

5.2 Opportunities in the Data Sources Market

Successful use cases began to emerge when data became more accessible and processing improved. This creates a positive circular effect where data sources and collection tools explode—since 2010, the types and volumes of digital data collected each year have grown exponentially since 2010, thanks to enormous advances in technology for building data pipelines. Applying this background to the Web3 market, many data sources can be recursively generated on-chain in the future. This also means that blockchain will expand into various business fields. At this point, we can expect data acquisition to advance through data marketplaces like Ocean Protocol or DeWi (decentralized wireless) solutions like Helium and XNET, as well as storage solutions.

5.3 What matters is meaningful data and analysis

However, the most important thing is to keep asking what data should be prepared to extract the insights that are really needed. There is nothing more wasteful than building a data pipeline for the sake of building a data pipeline without explicit assumptions to validate. Existing markets have achieved numerous innovations through building data pipelines, but have also paid a countless price through repeated pointless failures. It is also good to have constructive discussions about the development of the technology stack, but the industry needs time to think and discuss more fundamental issues, such as what data should be stored in the block space, or what purpose the data should be used for. The “goal” should be to realize the value of Web3 through actionable intelligence and use cases, and in this process, developing multiple basic components and completing the pipeline are the “means” to achieve this goal.