miHoYo and Fudan release a large language model "agent" with perception, brain, and action

Original source: AIGC Open Community

Image source: Generated by Unbounded AI

Large language models such as ChatGPT demonstrate unprecedented creative capabilities, but they are still far from AGI (Artificial General Intelligence) and lack human-like capabilities such as autonomous decision-making, memory storage, and planning.

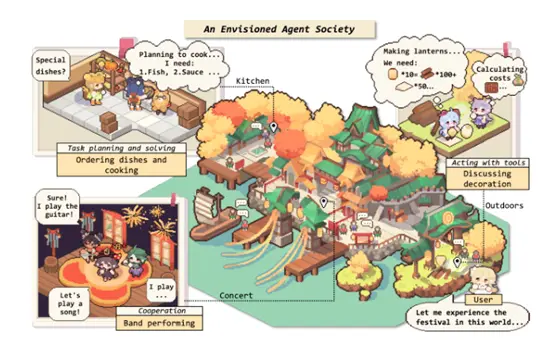

To explore how large language models might evolve toward AGI, and eventually into a super artificial intelligence that surpasses humans, miHoYo and the Fudan NLP research team jointly released a paper on “agents” based on large language models. Agents with the three functions of perception, brain, and action were placed in experimental environments such as text games and sandbox games and left to act on their own.

The results show that these agents have human-like capabilities such as autonomous perception, planning, decision-making, and communication. For example, when the surrounding environment becomes harsher, the agents automatically adjust their strategies and actions. In a social simulation environment, the agents exhibit human-like emotions such as empathy. When two unfamiliar agents have a brief exchange, they remember each other.

This technical framework resembles the AI agent game simulation experiments released earlier by Stanford University and Tsinghua University. All of them build more powerful AI agents on top of large language models, and they have helped advance the industry.

Paper Address:

Github:

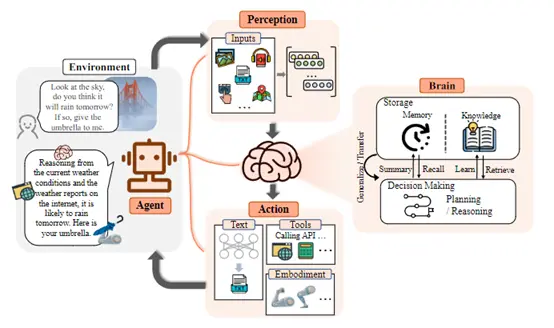

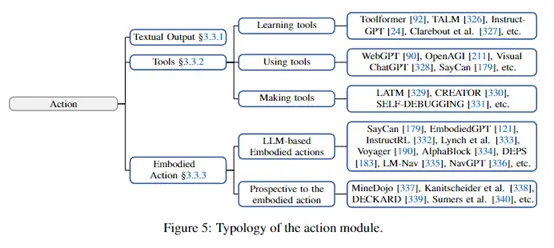

According to the paper, the agent consists mainly of three modules: perception, decision-making and control, and execution. It perceives the environment, makes intelligent decisions, and then performs specific actions.

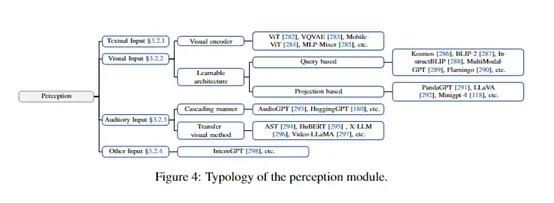

## Perception Module

The perception module obtains information from the environment and is equivalent to the human senses. It can contain a variety of sensors that capture different types of data: a camera captures image information, a microphone captures voice information, and so on.

The perception module preprocesses this raw data and converts it into a digital representation that the agent's subsequent modules can work with. Commonly used perception sensors include:

Image sensors: cameras, RGB-D cameras, etc., used to obtain visual information.

Sound sensors: microphones, used to obtain audio information such as voice and ambient sound.

Position sensors: GPS, INS (inertial navigation system), etc., used to determine the position of the agent itself.

Tactile sensors: haptic arrays, tactile gloves, etc., used to obtain tactile feedback when objects come into contact.

Environmental sensors: temperature, humidity, air pressure, and similar sensors, used to obtain environmental parameters.

Preprocessing includes steps such as image denoising, audio noise reduction, and format conversion, which produce normalized data that subsequent modules can use. The perception module can also perform feature extraction, for example extracting visual features such as edges, textures, and target regions from images.
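As a rough illustration of the normalization and feature-extraction steps described above, the following minimal sketch scales a tiny grayscale frame to the range 0 to 1 and computes a crude horizontal edge feature. The function names and the sample frame are assumptions made for this example and do not come from the paper.

```python
from typing import List

Image = List[List[float]]


def normalize(image: List[List[int]]) -> Image:
    """Scale raw 8-bit pixel values into the range [0.0, 1.0]."""
    return [[pixel / 255.0 for pixel in row] for row in image]


def horizontal_edges(image: Image) -> Image:
    """Crude edge feature: absolute difference between horizontally adjacent pixels."""
    return [
        [abs(row[x + 1] - row[x]) for x in range(len(row) - 1)]
        for row in image
    ]


# Hypothetical 3x4 grayscale frame: dark on the left, bright on the right.
raw_frame = [
    [0, 0, 255, 255],
    [0, 0, 255, 255],
    [0, 0, 255, 255],
]

normalized = normalize(raw_frame)      # preprocessing step
edges = horizontal_edges(normalized)   # feature extraction step
```

Each row of `edges` has a single large value where the dark region meets the bright one, which is the kind of compact feature a downstream module could consume instead of raw pixels.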

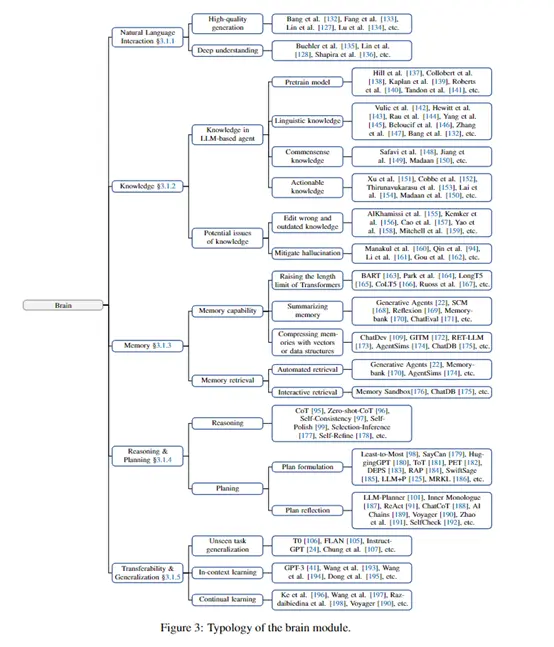

## Decision and Control Module

This module is the “brain” of the agent. It processes and analyzes the data obtained by the perception module and makes decisions accordingly. It can be subdivided into the following submodules:

Knowledge base/memory: stores prior knowledge and experience, as well as observations and other information gathered during execution.

Reasoning/planning: analyzes the current environment and develops a course of action for the target task, such as path planning or action sequence planning.

Decision-making: makes the best available decision based on the current state of the environment, stored knowledge, and reasoning results.

Control: converts the decision into control instructions and issues them to the execution module.

The design of the decision and control module is the key to agent technology. Early agents used logic- and rule-based symbolic methods, while deep learning techniques have become mainstream in recent years. The module's input is the data obtained by perception, and its output is the control instructions for the execution module.
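The four submodules can be pictured with a small sketch. This is only an illustrative toy under stated assumptions: the `Brain` class, its method names, and the tea-making task are hypothetical, and a simple lookup table stands in for the language model that would do the planning in a real agent.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Brain:
    """Hypothetical decision-and-control module with four submodules."""
    knowledge: Dict[str, List[str]]                   # knowledge base: goal -> known steps
    memory: List[str] = field(default_factory=list)   # observations made during execution

    def remember(self, observation: str) -> None:
        self.memory.append(observation)

    def plan(self, goal: str) -> List[str]:
        # Reasoning/planning: look up a course of action for the target task.
        return list(self.knowledge.get(goal, []))

    def decide(self, plan: List[str]) -> str:
        # Decision-making: pick the first step not yet observed as done.
        for step in plan:
            if f"done:{step}" not in self.memory:
                return step
        return "idle"

    def control(self, decision: str) -> Dict[str, str]:
        # Control: wrap the decision as an instruction for the execution module.
        return {"command": decision}


brain = Brain(knowledge={"make tea": ["boil water", "add tea leaves", "pour water"]})
brain.remember("done:boil water")
instruction = brain.control(brain.decide(brain.plan("make tea")))
```

Because the memory already records that the water was boiled, the instruction issued here is for the next step, "add tea leaves".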

## Execution Module

The execution module receives control instructions and translates them into specific environmental interaction behaviors to achieve the corresponding task. It is equivalent to the “limbs” of a person. The actuator connects to the agent’s “effector” and drives the effector to change the environment according to the control command. The main effectors include:

Motion actuators: robotic arms, robot chassis, etc., to change the position of the agent itself or perform object operations.

Speech/text output: Speech synthesizers, displays, etc. to interact with the environment in speech or text.

Tool/equipment operation interfaces: control various devices and tools, expanding the agent's ability to act on its environment.

The specific design of the execution module depends on the physical form of the agent. For example, a service agent only needs a text or voice interface, while a robot needs to connect to its actuators and precisely control its kinematics. Accurate and robust execution is key to completing a task successfully.
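One simple way to picture how control instructions reach effectors is a dispatch table. The sketch below is an assumption for illustration only: the instruction format, the effector names, and the `log` list (which stands in for the environment) are all hypothetical.

```python
from typing import Callable, Dict, List

log: List[str] = []  # stands in for the environment that a real effector would change


def speak(text: str) -> None:
    """Speech/text output effector (simulated)."""
    log.append(f"say: {text}")


def move_to(target: str) -> None:
    """Motion effector (simulated)."""
    log.append(f"move: {target}")


# Effector registry: control command name -> function that drives the effector.
EFFECTORS: Dict[str, Callable[[str], None]] = {
    "speak": speak,
    "move_to": move_to,
}


def execute(instruction: Dict[str, str]) -> bool:
    """Dispatch one control instruction; return False if no effector handles it."""
    effector = EFFECTORS.get(instruction["command"])
    if effector is None:
        return False
    effector(instruction["argument"])
    return True


ok = execute({"command": "speak", "argument": "Hello"})
unknown = execute({"command": "fly", "argument": "north"})
```

Returning `False` for an unknown command, rather than raising, lets the decision module treat the failure as feedback and choose another action.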

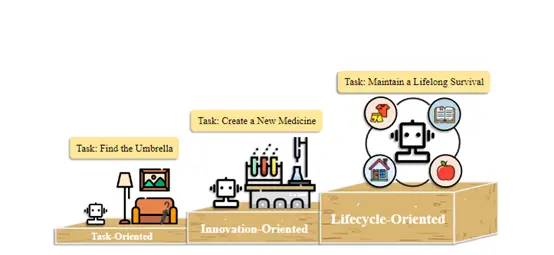

In testing, the researchers mainly carried out three types of experiments (task, innovation, and life cycle management) to observe how the agent performs in different environments.

## Task Experiment

The researchers built two kinds of simulation environments, text games and life scenarios, to test the ability of agents to complete daily tasks. Text game environments describe the virtual world in natural language, so agents need to read text descriptions to perceive their surroundings and take action.

Life scenario simulations are more realistic and complex, and agents need to use common sense knowledge to better understand commands, for example proactively turning on the lights when the room is dark.

Experimental results show that, in these simulated environments, agents can use their strong text comprehension and generation capabilities to decompose complex tasks, make plans, and interact with dynamically changing surroundings, ultimately accomplishing their predetermined goals.
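The read-description-then-act loop of a text environment can be sketched with a toy example built around the dark-room case mentioned above. Everything here is a hypothetical stand-in: `DarkRoomGame` is not one of the paper's environments, and the rule-based `choose_action` replaces the language model that would normally pick the action.

```python
from typing import List


class DarkRoomGame:
    """Toy text environment: the goal is to read a note in a dark room."""

    def __init__(self) -> None:
        self.light_on = False
        self.done = False

    def describe(self) -> str:
        if not self.light_on:
            return "The room is dark. You cannot see anything."
        return "The room is lit. A note lies on the table."

    def step(self, action: str) -> None:
        if action == "turn on light":
            self.light_on = True
        elif action == "read note" and self.light_on:
            self.done = True


def choose_action(description: str) -> str:
    """Rule-based stand-in for the language model's decision."""
    if "dark" in description:
        return "turn on light"   # common sense: light is needed before reading
    return "read note"


game = DarkRoomGame()
actions: List[str] = []
while not game.done and len(actions) < 5:   # step cap guards against endless loops
    action = choose_action(game.describe())
    game.step(action)
    actions.append(action)
```

The agent never receives the internal `light_on` flag; it only sees the text from `describe()`, which mirrors how a text game agent perceives its surroundings.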

## Innovative Experiment

The researchers explored the potential of agents in specialized fields such as scientific innovation. Because these fields suffer from scarce data and domain knowledge that is hard to understand, the researchers tested equipping agents with various general-purpose or specialized tools to improve their grasp of complex domain knowledge.

Experiments show that the agent can use search engines, knowledge graphs and other tools to conduct online research, and interface with scientific instruments and equipment to complete practical operations such as material synthesis. This makes it a promising assistant to scientific innovation.
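Tool use of this kind is often organized as a registry from which the agent selects. The following is a minimal sketch under stated assumptions: both tools are offline stubs, the single graph entry is ordinary chemistry rather than data from the paper, and the simple `select_tool` rule stands in for the language model's own choice.

```python
from typing import Callable, Dict, Tuple

# Tiny in-memory knowledge graph: (entity, relation) -> value.
GRAPH: Dict[Tuple[str, str], str] = {("water", "formula"): "H2O"}


def knowledge_graph(query: str) -> str:
    """Look up an 'entity relation' query in the graph stub."""
    entity, _, relation = query.partition(" ")
    return GRAPH.get((entity, relation), "unknown")


def web_search(query: str) -> str:
    """Placeholder search tool; performs no network access."""
    return f"search results for: {query}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "knowledge_graph": knowledge_graph,
    "web_search": web_search,
}


def select_tool(query: str) -> str:
    """Stand-in for the model's tool choice: known structured facts go to the graph."""
    entity, _, relation = query.partition(" ")
    return "knowledge_graph" if (entity, relation) in GRAPH else "web_search"


def answer(query: str) -> str:
    return TOOLS[select_tool(query)](query)
```

For instance, `answer("water formula")` is served from the graph stub, while a query the graph does not cover falls back to the search placeholder.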

## Life Cycle Experiment

The researchers used the open-world game Minecraft to test the agent’s ability to continuously learn and survive. Agents start with the most basic activities such as mining wood and crafting workbenches, gradually exploring unknown environments and acquiring more complex survival skills.

In the experiment, the agent is used for high-level planning and can continuously adjust its strategy according to environmental feedback. The results show that the agent can develop skills fully autonomously, continuously adapt to new environments, and demonstrate strong life cycle management capabilities.
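The idea of adjusting a plan from environmental feedback can be sketched as follows. This is a toy under stated assumptions, not the paper's method: the skill table is a hypothetical simplification of early Minecraft progression, and `attempt` simulates the environment reporting success or failure.

```python
from typing import Dict, List, Set

# Hypothetical skill prerequisites, loosely modeled on early game progression.
REQUIRES: Dict[str, List[str]] = {
    "mine wood": [],
    "craft workbench": ["mine wood"],
    "craft pickaxe": ["craft workbench"],
}


def attempt(skill: str, learned: Set[str]) -> bool:
    """Simulated feedback: a skill succeeds only if its prerequisites are learned."""
    return all(req in learned for req in REQUIRES[skill])


def learn(goal: str) -> List[str]:
    """Try the goal; on failure, revise the plan to learn a missing prerequisite first."""
    learned: Set[str] = set()
    order: List[str] = []
    stack = [goal]
    while stack:
        skill = stack[-1]
        if skill in learned:
            stack.pop()
        elif attempt(skill, learned):
            learned.add(skill)
            order.append(skill)
            stack.pop()
        else:
            # The attempt failed, so plan the first missing prerequisite next.
            missing = [r for r in REQUIRES[skill] if r not in learned]
            stack.append(missing[0])
    return order


skills = learn("craft pickaxe")
```

The agent starts by attempting the goal directly and only discovers the simpler skills through failure, which is the feedback-driven adjustment the paragraph above describes.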

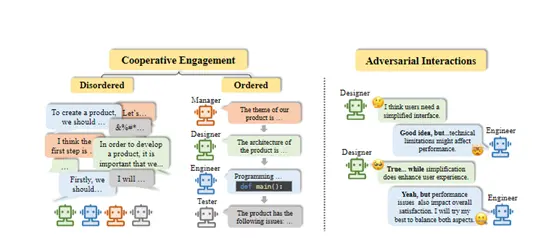

In addition, in terms of social simulation, the researchers explored whether agents exhibit personality and social behavior, and tested different environmental settings. The results show that agents can exhibit certain levels of cognitive abilities, emotions, and personality traits. In a simulated society, spontaneous social activities and group behavior occur between agents.