What are the popular Chinese researchers at OpenAI, Google and Meta thinking | Transcript of the conversation

Original source: Silicon Star People

Image source: Generated by Unbounded AI

Image source: Generated by Unbounded AI

The seats were packed and the aisles were full of people.

You might even think it was a celebrity meeting.

But this is actually one of the roundtables at the GenAI conference in Silicon Valley.

It was arranged on the “auxiliary stage” at noon when people are most sleepy. There were many CEOs and founders of Silicon Valley star companies sitting on the stage in another large conference room, and this round table was “just” some researchers. , but people still kept pouring into the small room.

Their target was three Chinese researchers. In the past, in Silicon Valley, this kind of scene always happened when “Chinese executives with the highest positions in Silicon Valley companies” appeared, but this time, people were chasing three young people.

Xinyun Chen, Chunting Zhou and Jason Wei.

**Young Chinese researchers at three of the most important star AI companies in Silicon Valley. **

**Young Chinese researchers at three of the most important star AI companies in Silicon Valley. **

These three names will definitely be familiar to people who closely follow the trend of large models.

Xinyun Chen is a senior research scientist on the Google Brain and DeepMind inference teams. Her research interests are neural program synthesis and adversarial machine learning. She received a PhD in computer science from the University of California, Berkeley, and a bachelor’s degree in computer science from the ACM class at Shanghai Jiao Tong University.

She participated in papers including allowing LLM to create its own tools and teaching LLM to debug its own code, etc. These are all very important and critical papers in the field of AI code generation. She has also been exaggeratedly described by some media as a member of the “Google Deepmind Chinese Team”.

Chunting Zhou is a research scientist at Meta AI. In May 2022, she received her PhD from the Institute of Language Technology at Carnegie Mellon University. Her current main research interests lie in the intersection of natural language processing and machine learning, as well as new methods of alignment. The paper she led, which tried to use fewer and more refined samples to train large models, was greatly praised by Yann Lecun and recommended in a paper. The paper provided the industry with newer ideas in addition to mainstream methods such as RLHF.

The last one is Jason Wei of OpenAI, a star researcher highly respected by the domestic and foreign AI communities. The famous COT (Chain of Thoughts) developer. After graduating from his undergraduate degree in 2020, he became a senior researcher at Google Brain. During his tenure, he proposed the concept of thinking chains, which is also one of the keys to the emergence of LLM. In February 2023, he joined OpenAI and joined the ChatGPT team.

People come to these companies, but more to their research.

Many times in this forum, they are like students. You seem to be watching a university discussion. They are smart minds, quick-response logic, slightly nervous, but also full of witty words.

“Why do you have to think hallucinations are a bad thing?”

“But Trump is hallucinating every day.”

There was laughter.

This is a rare conversation. The following is the transcript. Silicon Star people also participated and asked questions.

Question: Let’s discuss a very important issue in LLM, which is hallucination. The concept of hallucination was proposed as early as when the model parameters were very few and the size was still very small. But now as the models become larger and larger, how has the problem of hallucination changed?

Chunting: I can talk first. I did a project three years ago about hallucinations. The hallucination problem we faced at that time was very different from what we face now. At that time, we made very small models, and discussed hallucinations in specific fields, such as translation or document summary and other functions. But now it’s clear the problem is much larger.

I think there are many reasons why large models still produce hallucinations. First of all, in terms of training data, because humans have hallucinations, there are also problems with the data. The second reason is that because of the way the model is trained, it cannot answer real-time questions, and it will answer wrong questions. As well as deficiencies in reasoning and other abilities can lead to this problem.

Xinyun:** Actually I will start this answer with another question. Why humans think hallucinations are a bad thing. **

I have a story where my colleague asked the model a question, which was also taken from some assessment question banks: What will happen when the princess kisses the frog. The model’s answer is that nothing happens. **

In many model evaluation answers, the answer “will become a prince” is the correct answer, and the answer that nothing will happen will be marked as wrong. **But for me, I actually think this is a better answer, and a lot of interesting humans would answer this. **

The reason why people think this is an illusion is because they have not thought about when AI should not have hallucinations and when AI should have hallucinations.

For example, some creative work may require it, and imagination is very important. Now we are constantly making the model bigger, but one problem here is that no matter how big it is, it cannot accurately remember everything. Humans actually have the same problem. I think one thing that can be done is to provide some enhanced tools to assist the model, such as search, calculation, programming tools, etc. Humans can quickly solve the problem of hallucinations with the help of these tools, but the models don’t look very good yet. This is also a question that I would like to study myself.

Jason: **If you ask me, Trump is hallucinating every day. (Laughs) You say yes or no. **

But I think another problem here is that people’s expectations of language models are changing. **In 2016, when an RNN generates a URL, your expectation is that it must be wrong and untrustworthy. But today, I guess you would expect the model to be correct about a lot of things, so you would also think that hallucinations are more dangerous. So this is actually a very important background. **

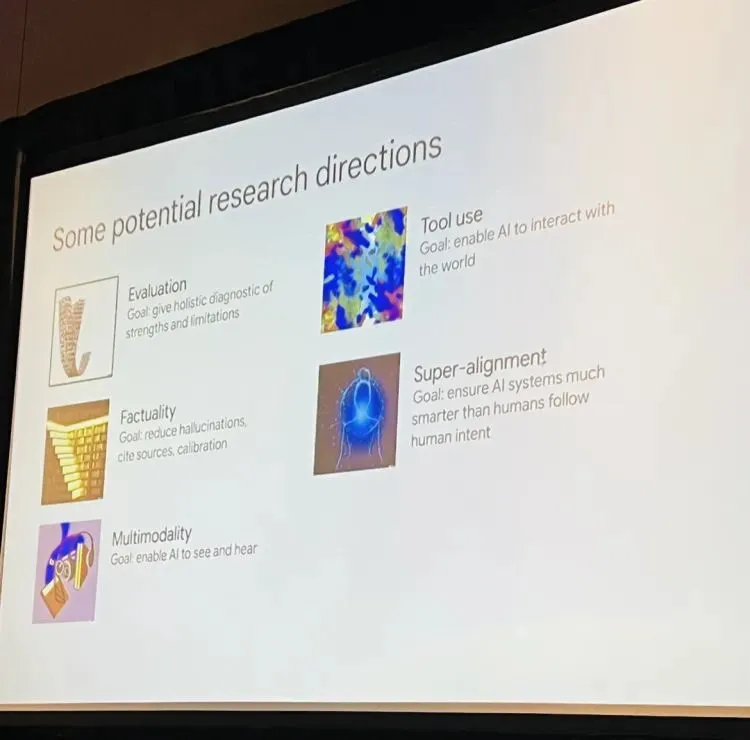

(Potential research directions listed by Jason Wei)

(Potential research directions listed by Jason Wei)

Ask: The next question is for Xinyun. A very important topic in the industry now is model self-improvement and self-debugging, for example. Can you share your research?

Xinyun: The inspiration for model self-debugging actually comes from how humans program. We know that if human programming ends once, there will definitely be problems and debugging will be necessary. For very powerful programmers, debugging is also a very important skill. Our goal is that without any external instructions and without humans telling it what is wrong, the model can look at the code it generated by itself, see the results of the operation, and then determine what went wrong. If there is a problem, go and debug it.

And why code generation will be helped by self-debugging, I think there are two reasons. First, code generation is basically based on open source code training. It can generate code that fits the general direction you want, but the code may be very long, contain many errors, and cannot be run. But we don’t need to start programming from scratch instead of using the existing code base, because no matter how many times you start from scratch, the problem is unavoidable, so it is necessary to do code generation on existing code resources, and debugging is become important. **Second, the debugging process continues to receive some external feedback, which is very helpful for improving the understanding of the model.

Q: A follow-up question is, if you leave the model to itself and let it improve itself, will there be no problems?

Chunting: We once did a strange experiment. As a result, the agent deleted the python development environment after executing the code. If this agent enters the real world, it may have a bad impact. This is something we need to consider when developing agents. I also found that the smaller the basic model, the smaller the ability, and it is difficult to improve and reflect on oneself. Maybe we can teach the model to improve itself by letting it see more “errors” during the alignment process.

Q: What about Jason, how do you do and what do you think about evaluating models.

Jason: My personal opinion is that evaluating models is increasingly challenging, especially under the new paradigm. There are many reasons behind this. One is that language models are now used in countless tasks, and you don’t even know the scope of its capabilities. The second reason is that if you look at the history of AI, we are mainly solving traditional and classic problems. The goals are very short-term and the text is very short. But now the solution text is longer, and even humans take a long time to judge. Perhaps the third challenge is that for many things, the so-called correct behavior is not very clearly defined. **

I think there are some things we can do to improve assessment capabilities. The first and most obvious one is to evaluate from a broader scope. When encountering some harmful behaviors, whether it can be more specifically broken down into smaller tasks for evaluation. Another question is whether more evaluation methods can be given for specific tasks. Maybe humans can give some, and then AI can also give some.

Q: What do you think about using AI to evaluate the route of AI?

Jason: It sounds great. I think one of the trends that I’m looking at lately is whether the models used to evaluate models can perform better. For example, the idea of constitutional AI training, even if the performance is not perfect now, it is very likely that after the next generation of GPT, these models will perform better than humans.

**Silicon Star: You are all very young researchers. I would like to know what you, as researchers in the enterprise, think about the serious mismatch in GPU and computing power between enterprises and academia. **

Jason: If you work in some constrained environment, it may indeed have a negative impact, but I think there is still room for a lot of work, such as the algorithm part, and research that may not require GPUs very much. There is never a shortage of topics.

Chunting: I also feel that there is a lot of space and places worth exploring. For example, research on alignment methods can actually be conducted with limited resources**. And maybe in the Bay Area, there are more opportunities for people in academia.

Xinyun: In general, there are two general directions for LLM research, one is to improve the result performance, and the other is to understand the model. We see that many good frameworks, benchmarks, etc., as well as some good algorithms come from academia.

For example, when I graduated from my Ph.D., my supervisor gave me a suggestion - **AI researchers should think about research in the time dimension of many years in the future, that is, not just consider improvements to some current things. , but a technological concept that may bring about radical changes in the future. **