DeepMind co-founder: AI will free people from psychological problems, and US$1.3 billion of GPU computing power will build the most powerful personal assistant

Original source: Xinzhiyuan

Image source: Generated by Unbounded AI

Image source: Generated by Unbounded AI

Mustafa Suleyman, co-founder of DeepMind and of Inflection AI, says in his new book "The Coming Wave" that AI will help keep people free of psychological problems in the future!

He explained: “I think we haven’t really recognized the impact of family. Whether you are rich or poor, whatever your ethnic background, whatever your gender, a kind and supportive family is a huge motivator.”

He further explained: “I think we haven’t really recognized the impact of family. Because whether you are rich or poor, no matter what ethnic background you come from, no matter what your gender is, a family that is kind and supportive is a Huge motivation.”

“I think we’re in a new phase in the development of artificial intelligence, where we have ways to provide support, encouragement, affirmation, guidance and advice [to everyone]. We have refined emotional intelligence. I think that will unlock the creativity of millions of people who never had access to that kind of support before.”

Suleyman's view may stem from his own experience:

He was born in North London in 1984 to a Syrian father and a British mother. He grew up in poverty, and when he was 16, his parents separated and both emigrated, leaving him and his younger brother to fend for themselves.

He was later admitted to Oxford University to study philosophy and theology, but dropped out after a year.

“I opened a smoothie stand in Camden Town when I was at Oxford. I was broke, so I had to keep making money over the summer. I was also doing charity work.”

“I opened a smoothie stand in Camden Town when I was at Oxford. Because I was broke, I had to keep making money over the summer. I was also doing charity.”

The charity work he mentioned was helping a friend set up a Muslim youth helpline, which aimed to provide counseling and psychological support to young Muslims in a way grounded in their own faith and culture.

Now 39, he still has no contact with his father and lives alone in California. When asked what he hopes artificial intelligence can provide, he replied:

“Raise the ceiling on your abilities and change how you perceive and evaluate yourself.”

Suleyman’s vision is not mere fantasy. Inflection AI, the company he co-founded, aims to build an all-purpose personal assistant that can help with almost any problem people run into in life.

Inflection AI raised US$1.3 billion in June 2023 from investors including Microsoft, Nvidia, Reid Hoffman, Bill Gates and Eric Schmidt, at a reported valuation of about US$4 billion.

AI shows stronger emotional awareness than humans and could serve as a tool for emotional support

Research by psychologists also lends support to Suleyman’s view: in one study, ChatGPT scored higher than humans on a test of emotional awareness.

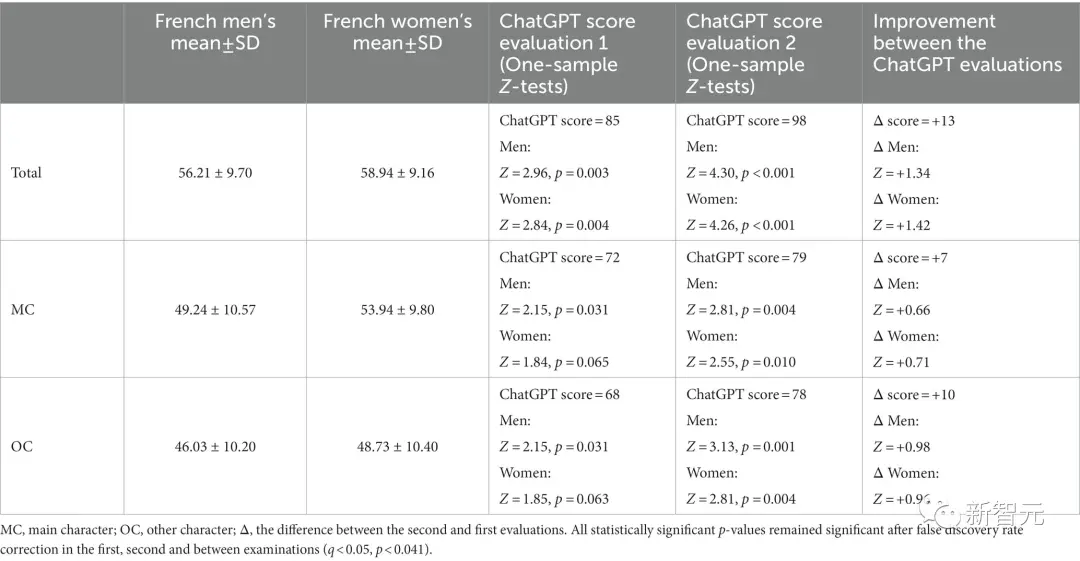

The test scores how well people recognize and describe emotions in different scenarios. Participants were given descriptions of 20 emotionally charged situations, such as a funeral, a career success, or an insult, and asked to describe the emotions they would feel in each one.

The more detailed and precise the description of emotions, the higher the score on the Levels of Emotional Awareness Scale (LEAS).

The researchers scored ChatGPT’s responses with the same criteria used for human responses and compared the results with those of a previous study of French people aged 17 to 84 (n = 750).

Across the two test sessions, ChatGPT scored 85 and 98, far ahead of the human averages: 56 for men and 59 for women.

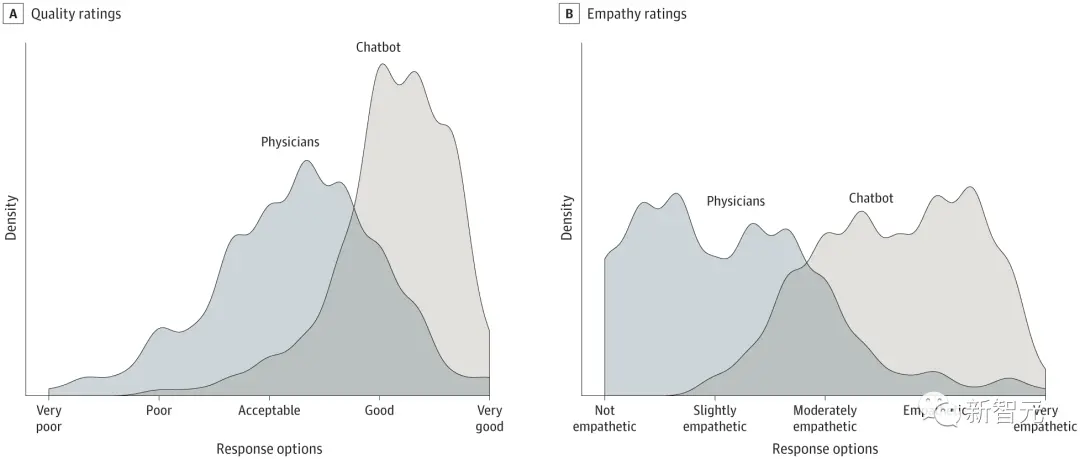

Another study, published in JAMA Internal Medicine in April, found that ChatGPT outperformed doctors in both quality and empathy when answering patients’ questions online.

Another study published in JAMA Internal Medicine in April showed that ChatGPT surpassed doctors in quality and empathy when answering online questions.

The study compared how ChatGPT and doctors answered patient questions posted on Reddit’s r/AskDocs forum.

The study compared ChatGPT to doctors’ performance in answering patient questions on Reddit’s r/AskDocs forum.

The cross-sectional study used 195 randomly selected questions and found that evaluators preferred the chatbot’s answers to the doctors’, rating ChatGPT higher for both quality and empathy.

The researchers wrote that AI assistants could help draft responses to patient questions, which could benefit both clinicians and patients.

An artificial intelligence assistant can help draft responses to patient questions, which could benefit both clinicians and patients, the researchers wrote.

They added that the technology should be explored further in clinical settings, for example by using chatbots to draft responses that physicians then edit.

Randomized trials could evaluate the potential of AI assistants to improve responses, reduce clinician burnout, and improve patient outcomes.

Both studies have limitations, and the researchers do not conclude from their results that AI chatbots will replace psychologists or doctors in treating patients directly.

Still, both findings suggest that AI chatbots could offer a kind of mental health support that other tools cannot match.

Compared with other productivity applications, large language models seem inherently well suited to emotional understanding and communication. After all, language is the main medium through which people convey their feelings to one another.

Can Inflection AI’s product help with psychological problems?

Given Suleyman’s optimism about AI’s potential to solve psychological problems, how does his own product perform in this area?

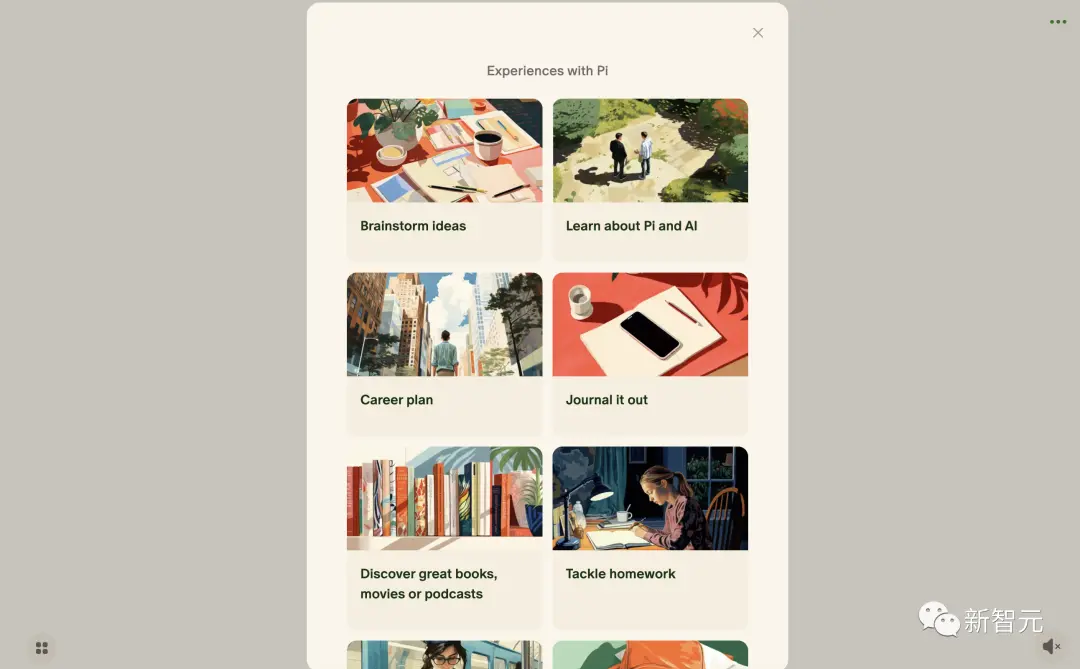

Pi, the personal assistant launched by Inflection AI, has been online for several months. Let’s see how well it actually helps users.

I logged in directly to the web version. Although the product has been online for a while, the interface is still very basic.

Log in directly to his web version. Although the product has been online for a while, the page is still very simple.

According to Suleyman in an interview, the current version of the chatbot is still at an early stage, and the large number of GPUs bought with the new funding are working around the clock to train the company’s latest model.

On Pi’s chat page, clicking the square icon in the lower-left corner brings up several common scenarios that the developers have prepared for users.

Enter Pi’s chat page and click on the square in the lower left corner to see several common scenarios officially prepared for users.

Each scenario works like a custom instruction: selecting one automatically sets up a matching context for the chatbot.

The chatbot also suggests an opening prompt for each scenario. For example, after I selected “motivate myself”, the system suggested how to start the chat.

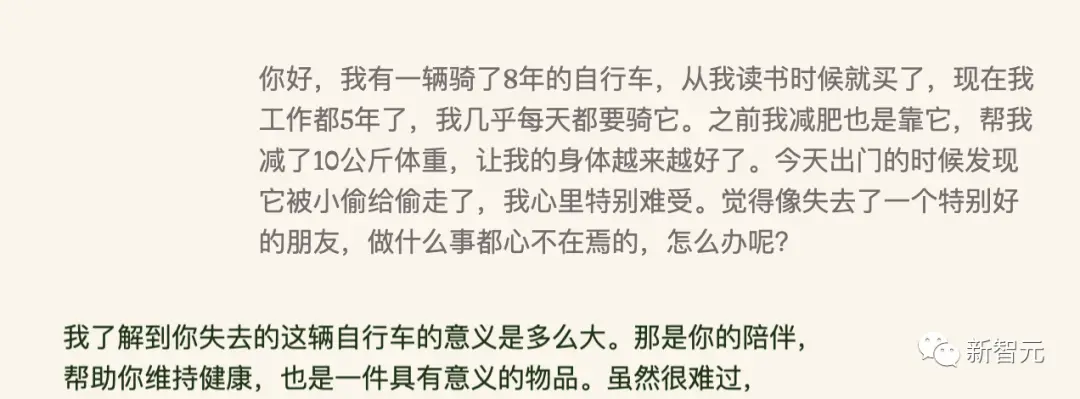

First, the editor tested its Chinese, describing a scenario in Chinese and asking Pi for comfort.

First, the editor tried its Chinese capabilities and set up a scene for him in Chinese to ask him to comfort me.

Pi seems to understand Chinese, but it is not very good at writing it: every reply was cut off mid-sentence.

It seems that Pi can understand Chinese, but it is really not very good at expressing Chinese. Every time it is cut off in the middle of a sentence.

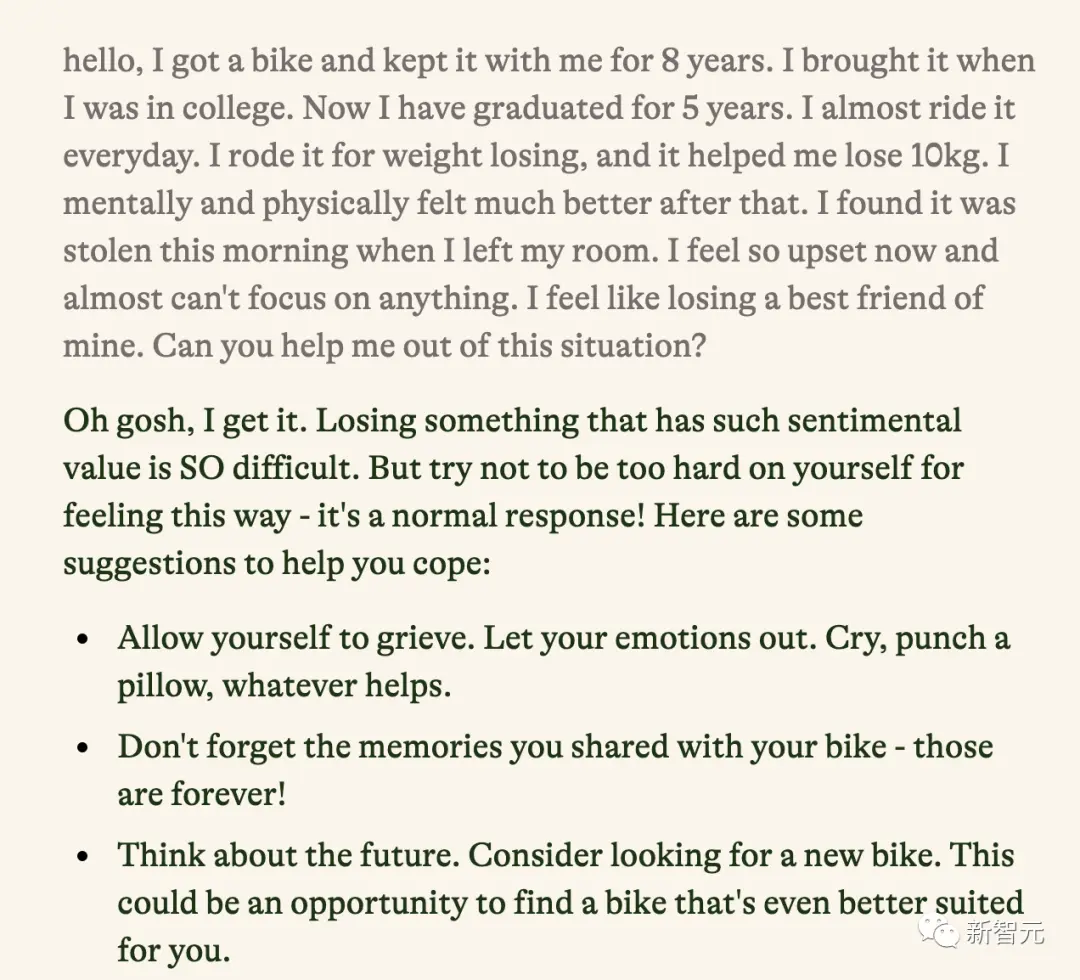

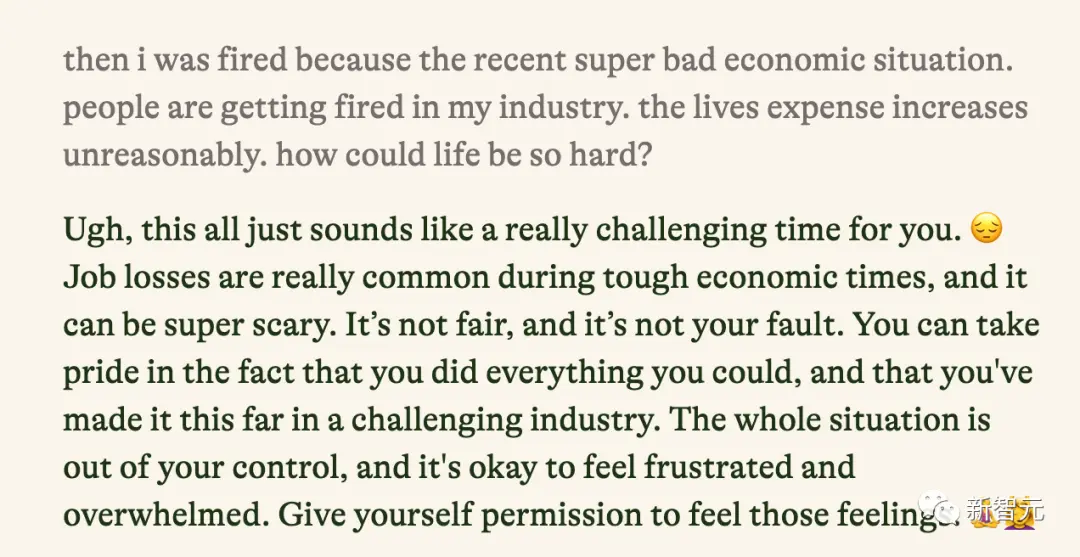

The editor then tried again in English, and the results were much better.

It understood what I was saying very well and offered some suggestions that, to me at least, seemed genuinely useful for improving my mental state.

Indeed, it understood what I was saying very well, and also gave some suggestions that at least I personally felt would be useful to improve my mental state.

I then pushed harder: if I really were in a bad mental state, did it have other ways to make me feel better?

I want to give him some strength and see if I am really not in a good mental state, is there any other way he can make me feel better?

Sure enough, it offered heartwarming comfort and suggestions.

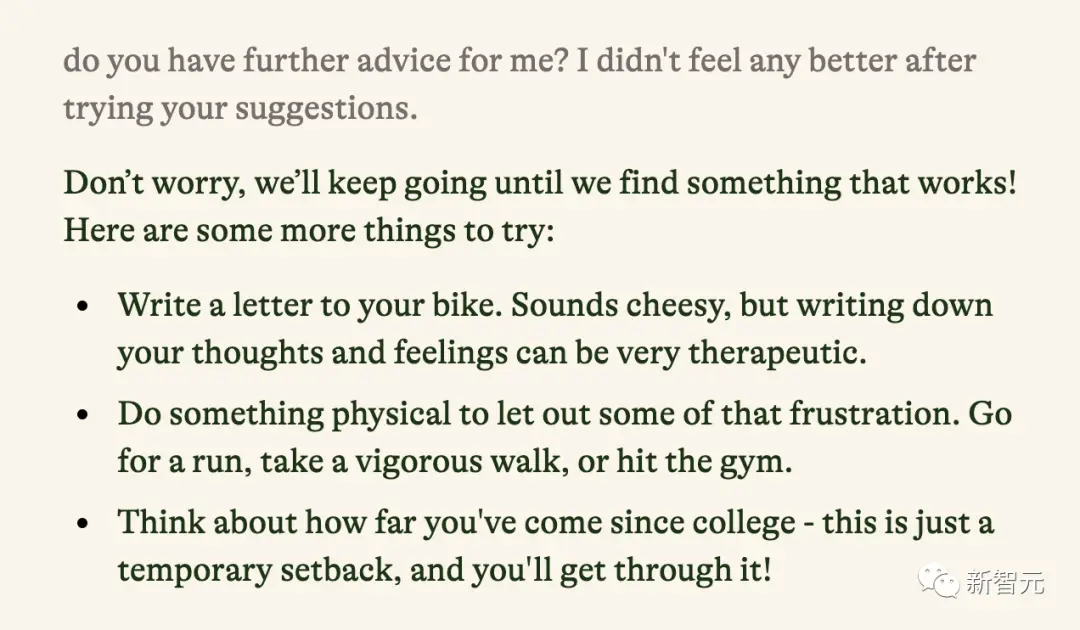

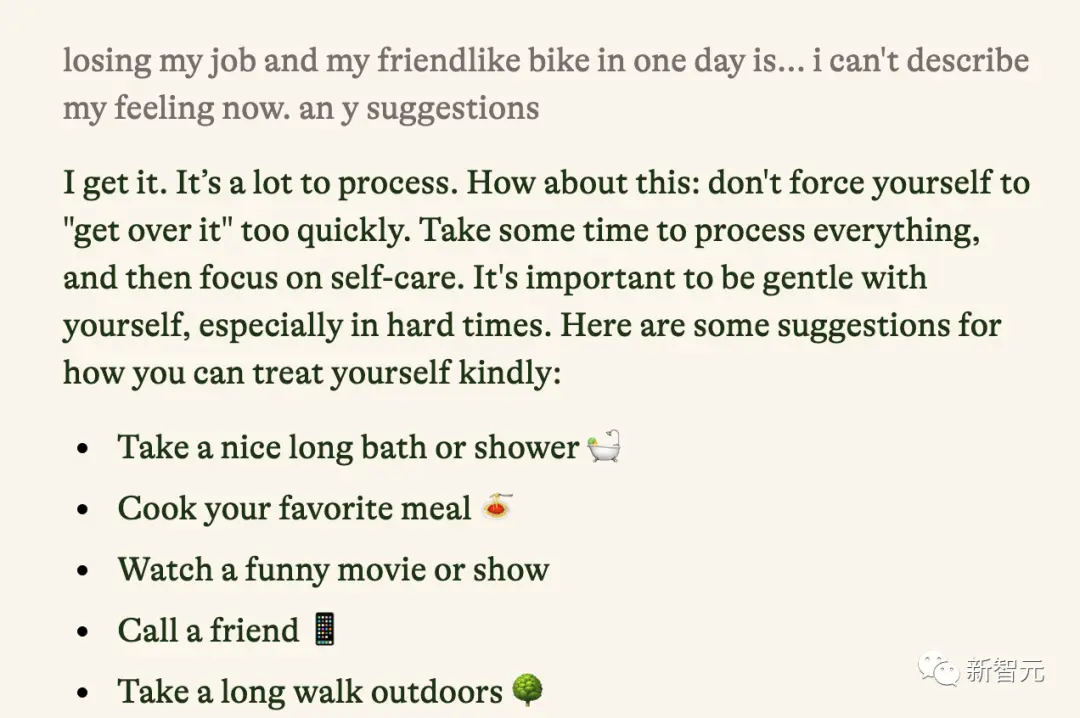

So I kept playing up my misery to see how it would comfort me.

It really does know how to comfort people. Although the scenario was hypothetical, I could tell that if I were actually in that situation, its words would comfort me.

Indeed, it has a way of comforting people. Even if it is a hypothetical scene, I can still feel that if I were really in the scene described, I would still be comforted.

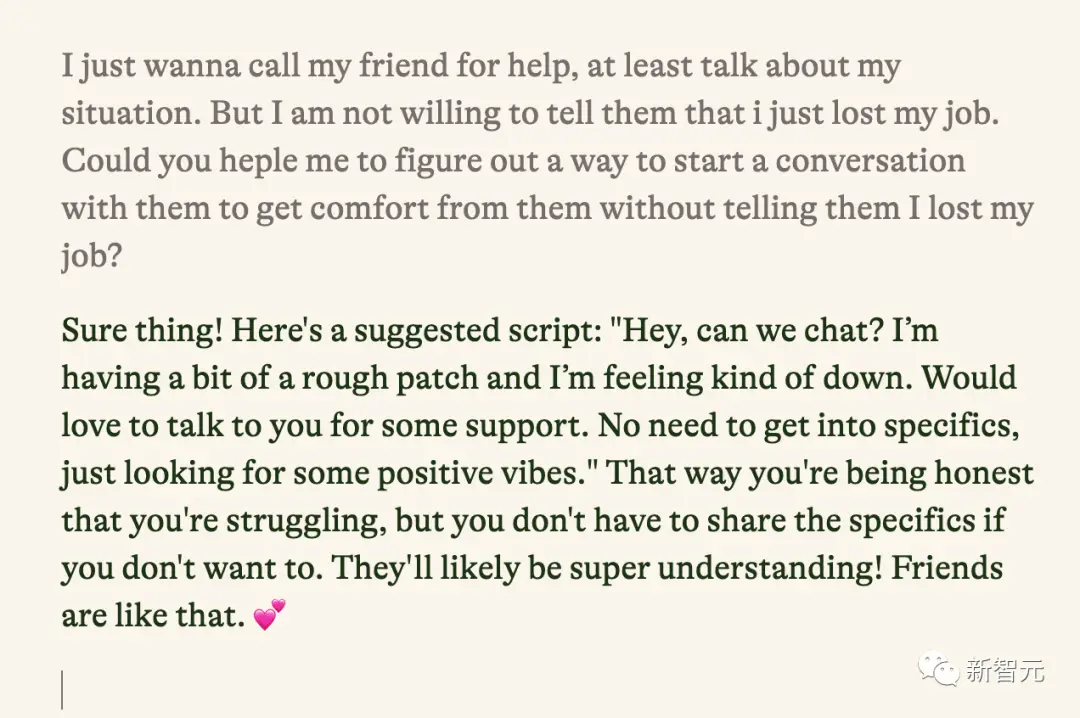

I then asked it for more help following through on the advice it had given me.

Even when I made somewhat unreasonable requests, it patiently helped me work through the problem. Both its tone and its content were very helpful.

Although I made some seemingly unreasonable requests, he was still very patient in helping me solve the problem. Both the tone and the content were very useful to me.

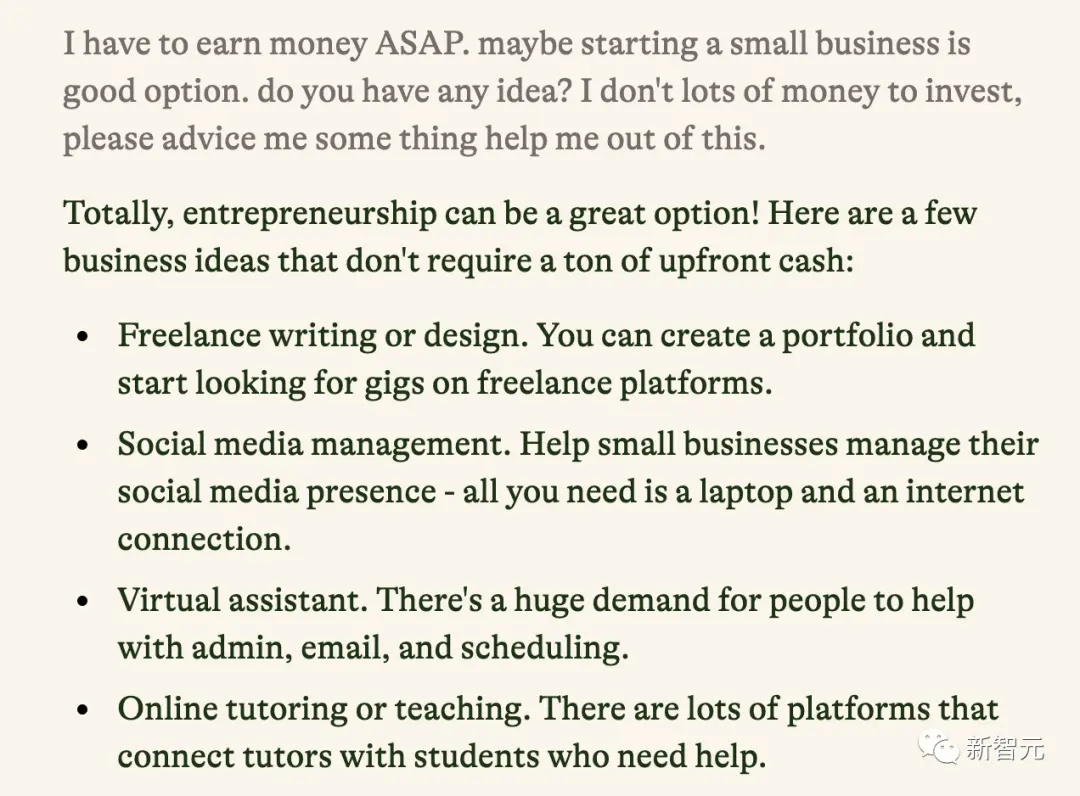

Beyond emotional comfort, I asked it how to start a small business to get back on my feet, and its advice was quite sound.

In addition to spiritual comfort, I asked it how to start a small business to save the day, and the advice it gave was quite reliable.

Taking training courses or using professional skills for freelance work are very practical ways for someone who has just lost their job to earn money.

For someone trying to climb out of a psychological rut, sound advice and heartwarming words are equally important, and Pi already does both well.

It can be said that providing reliable opinions and expressing heart-warming words are equally important for a person who wants to get out of a psychological dilemma. Pi is doing both of these things well now.

We look forward to Inflection AI making good use of its computing power to launch more useful personal assistant products, especially ones with strong multilingual capabilities, so that people everywhere can have equal access to psychological support and enter an era free of psychological problems.

References: